Why RSF's Press Freedom Index is flawed - and why it works

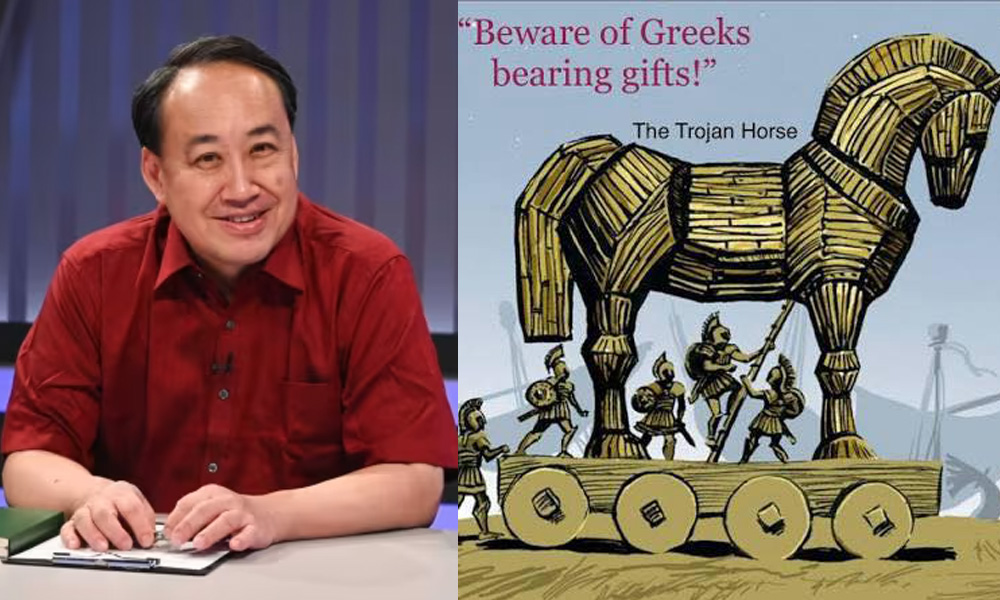

The weightage of each indicator is unknown. <strong>Cherian George</strong>

Originally published in journalism.sg. Reproduced with permission.

The Press Freedom Index of Reporters Without Borders (RSF) is once again making the news in Singapore, with law minister K Shanmugam dismissing the Republic’s low ranking as “quite absurd and divorced from reality” – even as he stated that the government’s approach has been to ignore criticisms that make no sense.

Indeed, the wonder is that the RSF rankings get so much airtime in Singapore ministers’ speeches. It is simply not deserved. On past occasions, ministers have referred to the rankings and then gone on to state that there is more to life than press freedom – without questioning the validity of the rankings in the first place. This time, at least, the law minister has questioned whether the rankings make sense. How, for example, could Guinea’s brutal junta rate higher than Singapore? (Another shocker: Indonesia lies at 100, below several Gulf states.)

The truth is that RSF’s Press Freedom Index is methodologically and conceptually flawed.

First, it lacks what researchers call “inter-coder reliability”. When surveying anything that’s subjective, you have to make sure that those taking the measurements (the coders) are all applying the same benchmarks. To the best of my knowledge, RSF does no such thing. Instead of using a common pool of rigorously trained assessors, it asks respondents within each country to rate that country on various indicators. Their responses help determine where the country ranks.

That’s like deciding the Miss World beauty pageant by comparing the scores given by the judges in each national competition. In such a system, if Miss Singapore judges gave the winner very high marks – perhaps because they were more easily impressed than, say, the Miss Venezuela competition judges – Miss Singapore could be Miss World faster than Ris Low could say “boomz”.
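The pageant analogy can be put in numbers. The sketch below is purely illustrative: the two countries, their "true" scores and the panel biases are all invented, and nothing here is RSF data or RSF's actual method. It assumes each local panel scores only its own country and adds its own leniency or harshness to what it observes.

```python
# Hypothetical illustration only: every number here is invented.
# Scores run from 0 to 100, where a HIGHER score means LESS press freedom.

# What a single, commonly trained panel would record for each country.
true_restriction = {"A": 30, "B": 60}

# How much each local panel over- or under-scores its own country.
# Panel A is harsh on its own country; panel B is slightly lenient.
panel_bias = {"A": 35, "B": -5}

def local_score(country):
    """Score produced when a country is rated only by its own panel."""
    return true_restriction[country] + panel_bias[country]

def rank(scores):
    """Order countries from freest (lowest score) to least free."""
    return sorted(scores, key=scores.get)

local_scores = {c: local_score(c) for c in true_restriction}
common_panel_scores = dict(true_restriction)  # same yardstick for everyone

print(rank(common_panel_scores))  # ranking under one common panel
print(rank(local_scores))         # ranking when each panel rates its own country
```

With a common yardstick, country A ranks as freer than B. Once each country is scored by its own panel, the order flips, even though nothing about the two countries has changed.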

RSF’s method means that, at the end of the day, what it gives us is not really a “press freedom index” but a “national perception of press freedom index”. Actually, it’s not even that. Depending on who is asked to fill out the survey, it could really be a “national press freedom monitors’ perceptions of press freedom index”. What we can conclude from Singapore’s low ranking is merely that the respondents in Singapore, whoever they are, have a worse opinion of their country’s press freedom than the respondents in 132 other countries have of theirs.

Note that it would be wrong to blame the respondents for the result. Even though the end product is an international comparison, that’s not what the respondents were asked for. Indeed, the ranking would probably be quite different if the local experts were shown a list of countries and simply asked, “Where do you think Singapore’s level of press freedom places it relative to these 174 other countries?” My guess is that such an approach would cause Singapore to leapfrog over dozens of states in the table and land somewhere in the 50-100 range: a middle-ranking and far-from-ideal state, but hardly an international pariah.

These, however, are little more than parlour games, because there is a more fundamental conceptual flaw in such rankings. When compiling an index, which is an aggregation of different indicators, the tricky part is what weights to attach to each separate indicator. Nobody has come up with a clear way to compare the different types of violation that are involved in the denial of press freedom.

For example, which is “worse”: (a) killing a journalist to stop a single story? or (b) giving broadcast licences to only a small number of commercial broadcasters who pump out entertainment, leading to a long-term denial of the public’s need to be informed through news and current affairs?

To Amnesty International, which exists to focus on individual human rights violations, (a) would certainly be worthy of attention, while (b) would probably not appear in its annual report. Yet, Article 19 of the Universal Declaration of Human Rights upholds the right of the public to receive information they need. If your main concern is the public’s right to know, (b) may be more worthy of attention than (a).
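The weighting problem can be made concrete with a small sketch. Everything in it is an assumption made for illustration: the two countries, the two indicators ("violence" for attacks on journalists, "structural" for licensing and ownership restrictions), the scores and both sets of weights are invented, and none of it reflects how RSF actually computes its index.

```python
# Hypothetical illustration only: countries, indicators, scores and weights
# are all invented. Higher scores mean more severe violations.

indicators = {
    "X": {"violence": 80, "structural": 20},  # journalists attacked, market fairly open
    "Y": {"violence": 10, "structural": 70},  # journalists safe, market tightly controlled
}

def index_score(scores, weights):
    """Weighted sum of one country's indicator scores."""
    return sum(weights[name] * scores[name] for name in weights)

def ranking(weights):
    """Order countries from freest (lowest index score) to least free."""
    totals = {c: index_score(s, weights) for c, s in indicators.items()}
    return sorted(totals, key=totals.get)

# Two equally defensible-looking weighting schemes.
violence_heavy = {"violence": 0.8, "structural": 0.2}
structural_heavy = {"violence": 0.2, "structural": 0.8}

print(ranking(violence_heavy))    # which country looks freer if violence dominates
print(ranking(structural_heavy))  # which looks freer if structural limits dominate
```

The same underlying scores produce opposite rankings depending on the weights chosen, which is why an index that does not declare its weights is hard to interpret.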

RSF just ignores this conceptual problem and does not declare the relative weights of different indicators in its index. But, if you read into the results, Singapore’s ranking must mean that RSF considers the Republic’s lack of alternative media and lawsuits against foreign media to be more serious than, say, torture and kidnapping of media workers and blocking of political websites (not practised here, but common in some countries that RSF places higher than Singapore in its rankings).

This problem is intractable, which is why more credible NGOs such as Amnesty International and Article 19 don’t bother to create rankings or ratings. They write detailed reports on each country without attempting to make international comparisons.

Like many media-savvy organisations, RSF benefits (at least in the short term) from the publicity payoff of publishing annual rankings. Looking at its success in getting noticed in Singapore, who can say it is wrong?